Basics of Probability and Stats - The inequalities that lead to LLN and CLT - PS01

The inequalities that lead to LLN and CLT

I am just starting a series of writings about Fast AI’s course on Neural Networks, Recommender systems (this was paused for a while but I am starting again), experimentation, Federated Learning (to be started soon), Reinforcement Learning(to be started soon), Causal Inference(hand notes have to come to the digital medium), and “the bare minimum stats and probability needed for a Machine Learning Engineer”. Apart from the Fast AI course which will be in a per-defined sequence, all other coverage will be in a random sequence which I wish to put in a sequence in the future.

The first post in the sequence is about the set of inequalities that lead to the Law of Large Numbers and the Central Limit Theorem. While, these are vast topics, I would try to cover them in brief and at-least give a sequence of inequalities that lead to the law of large numbers and central limit theorem.

I will be picking a lot of the work from the MIT course 6.012.

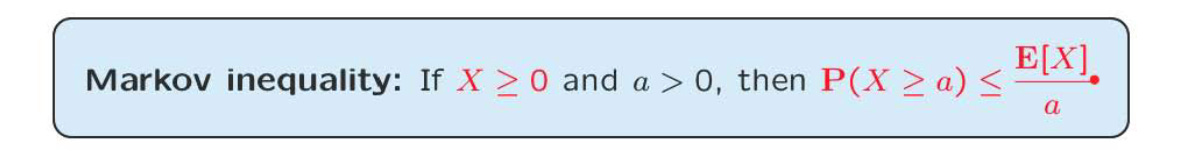

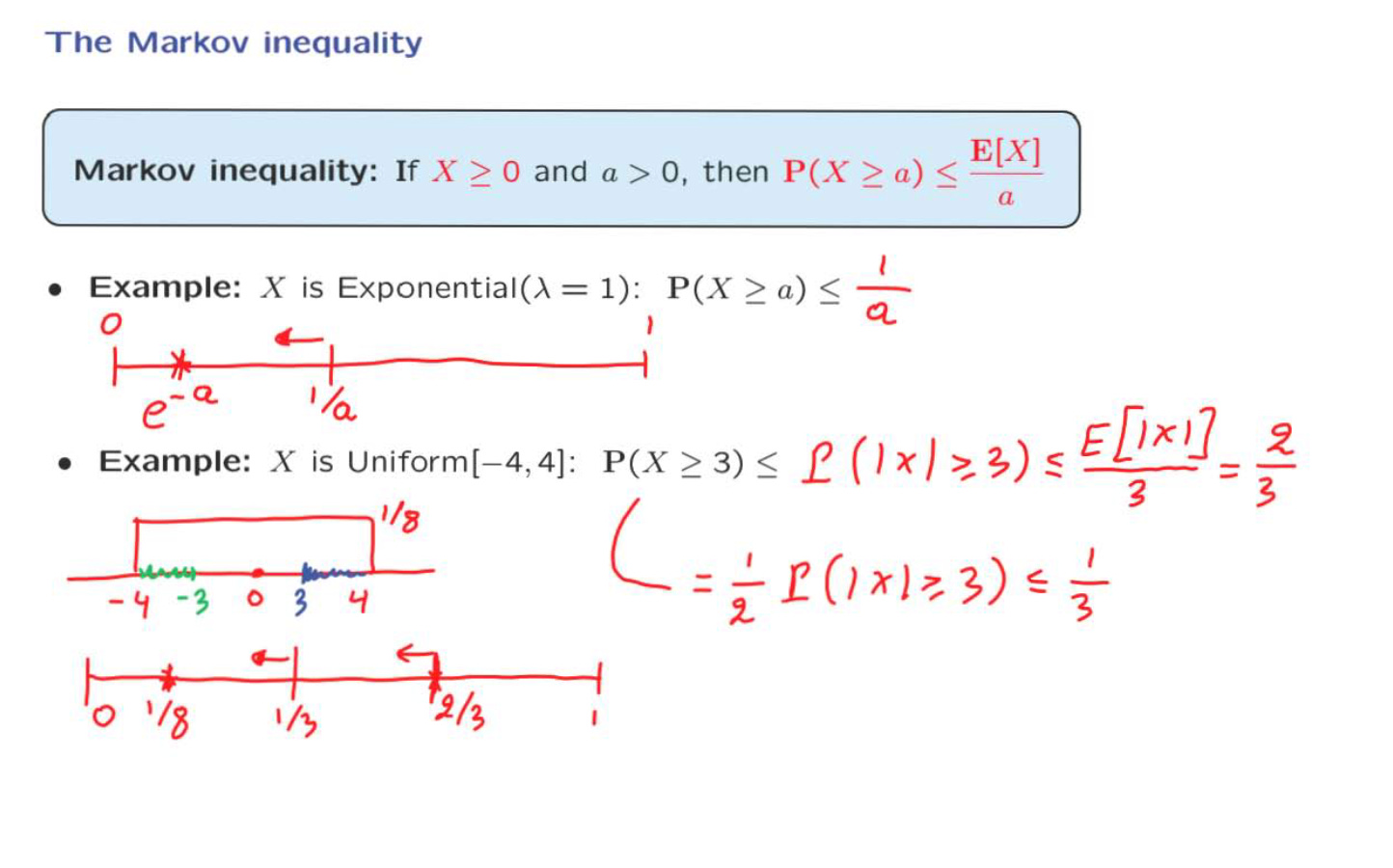

The Markov Inequality :- We use some information about a distribution. We use that information to find the probability of extreme events.

“If X > 0 and E[X) is small, then X is unlikely to be very large”

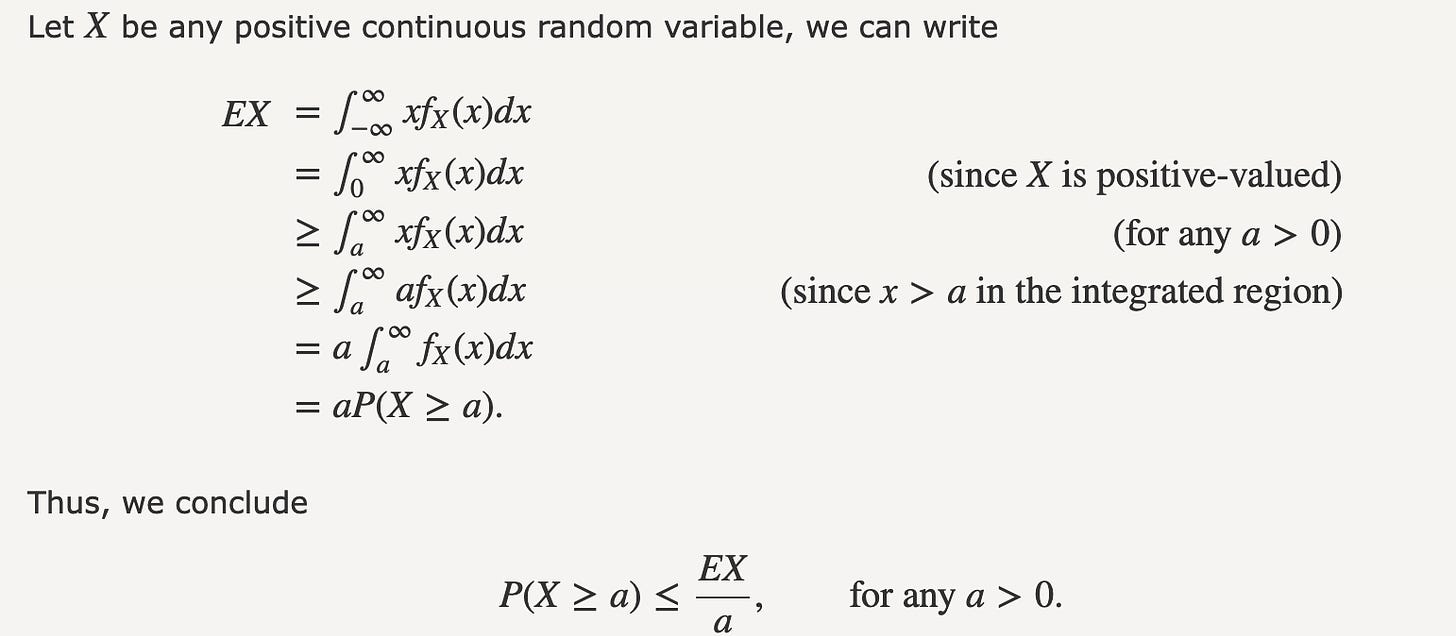

Example of Markov inequality -

Here we have taken the example of a exponential random variable with lambda=1 and then tried to calculate the probability of the random variable taking a value greater than a.

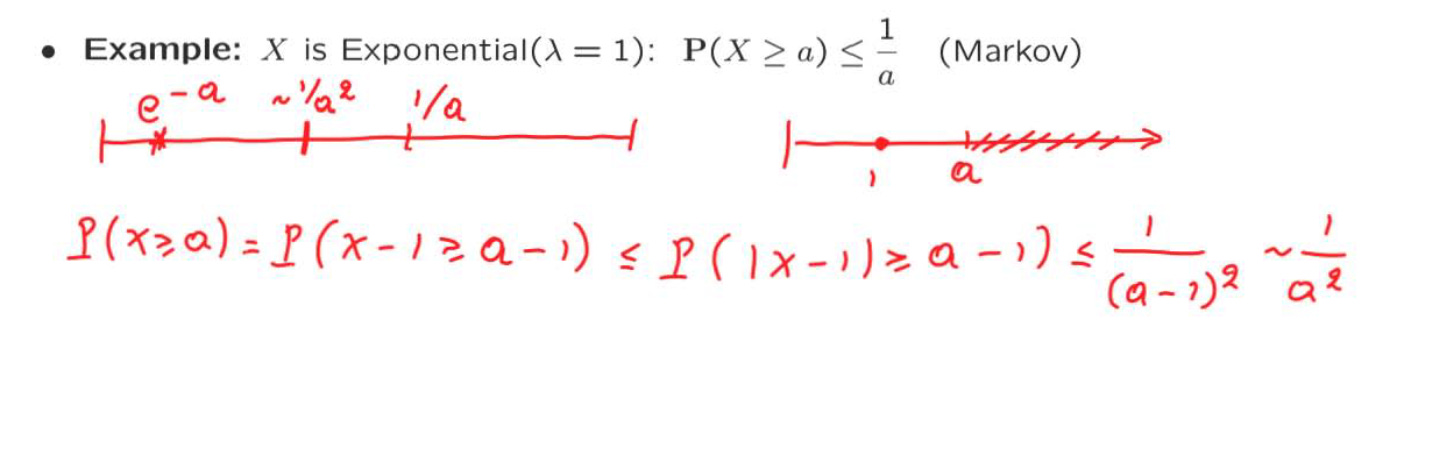

The Chebyshev Inequality :-

Random variable X, with finite mean and variance sigma^2

If the variance is small, then X is unlikely to be too far from the mean

We can clearly see how the probability falls off as a square of the constant c.

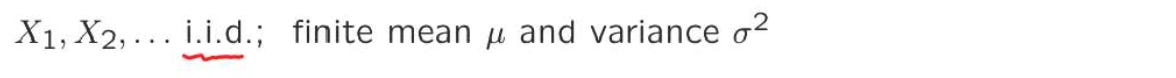

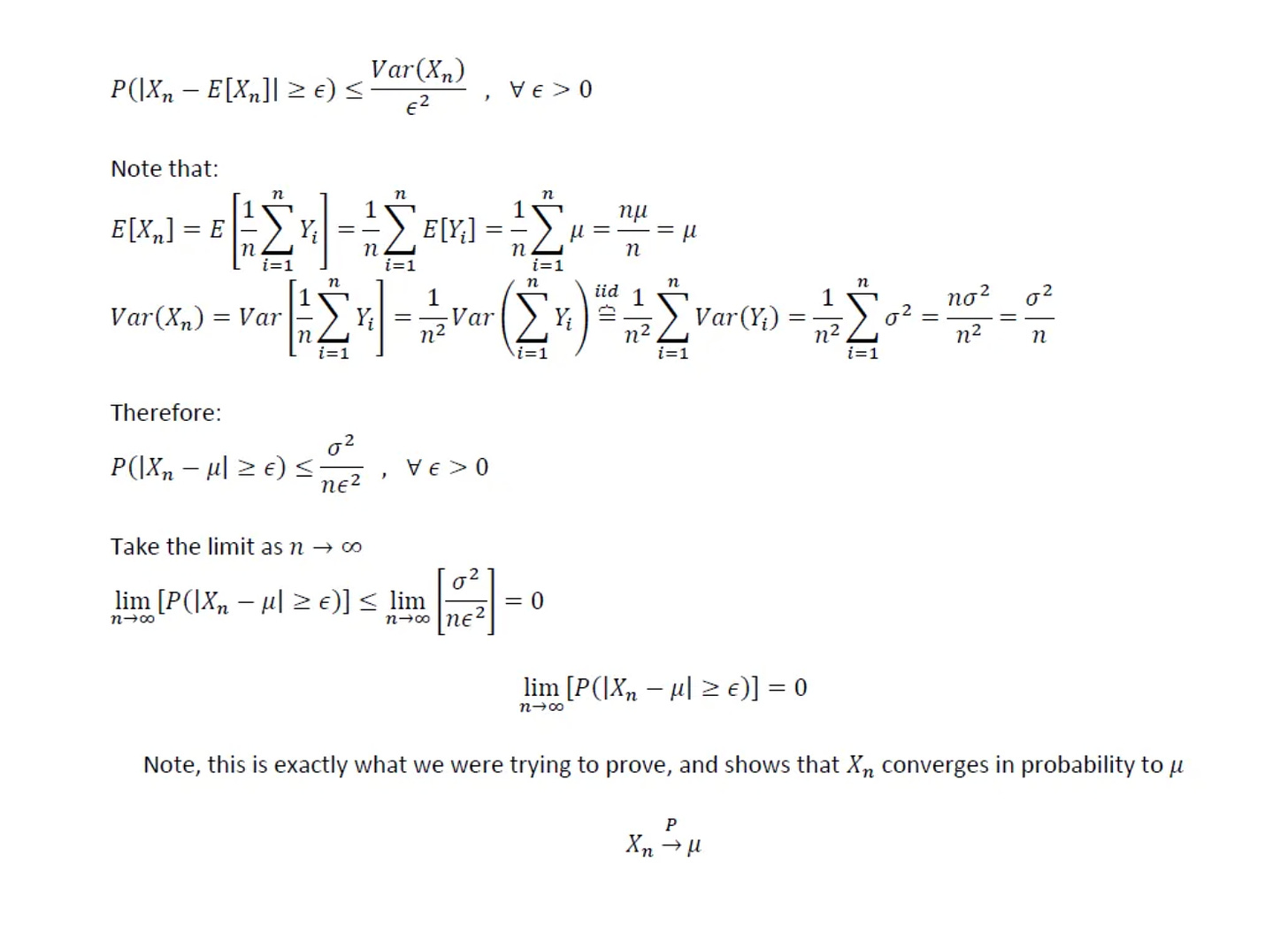

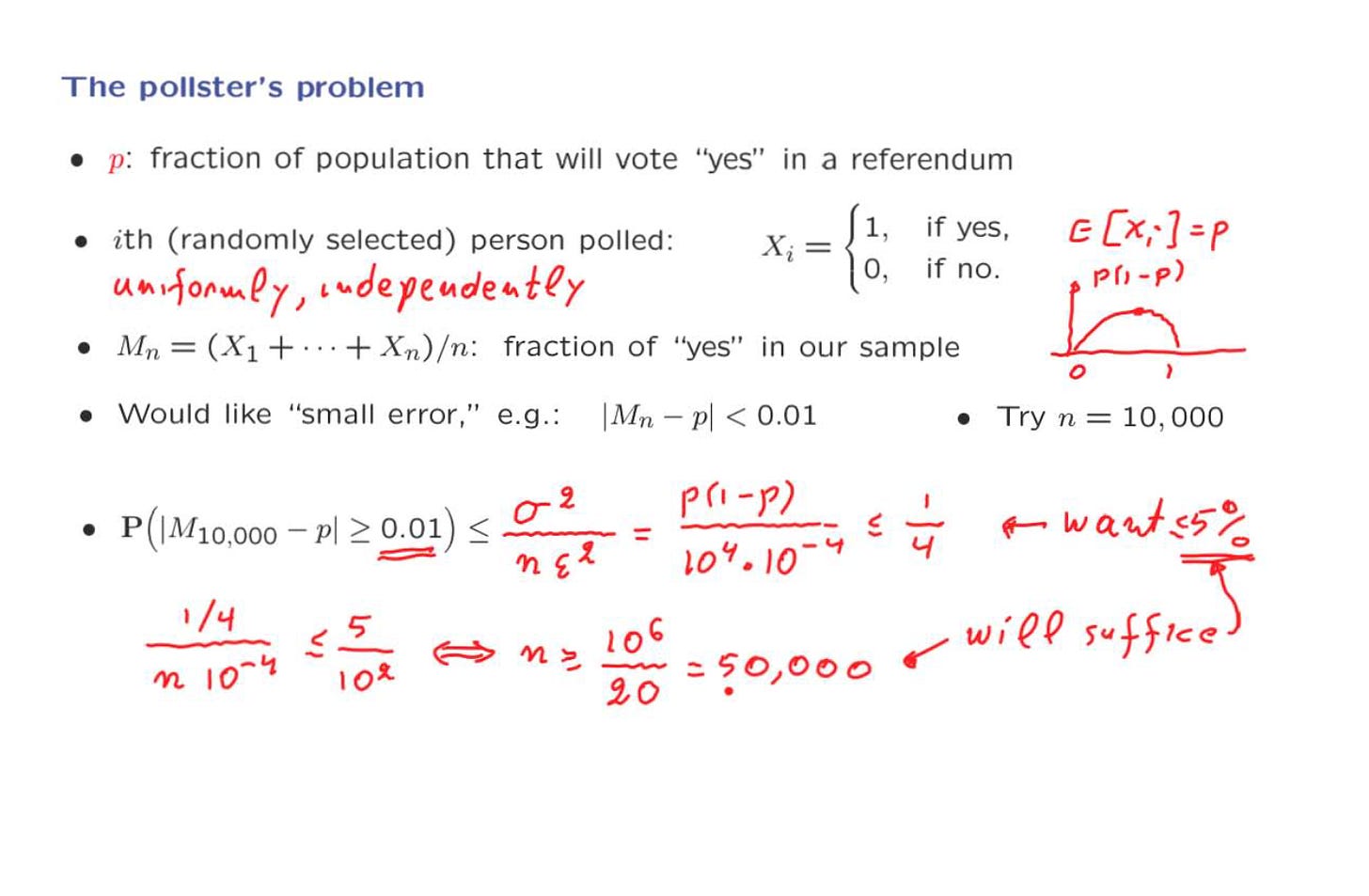

The Weak Law of Large Numbers (WLLN) -

Example of the WLLN:-

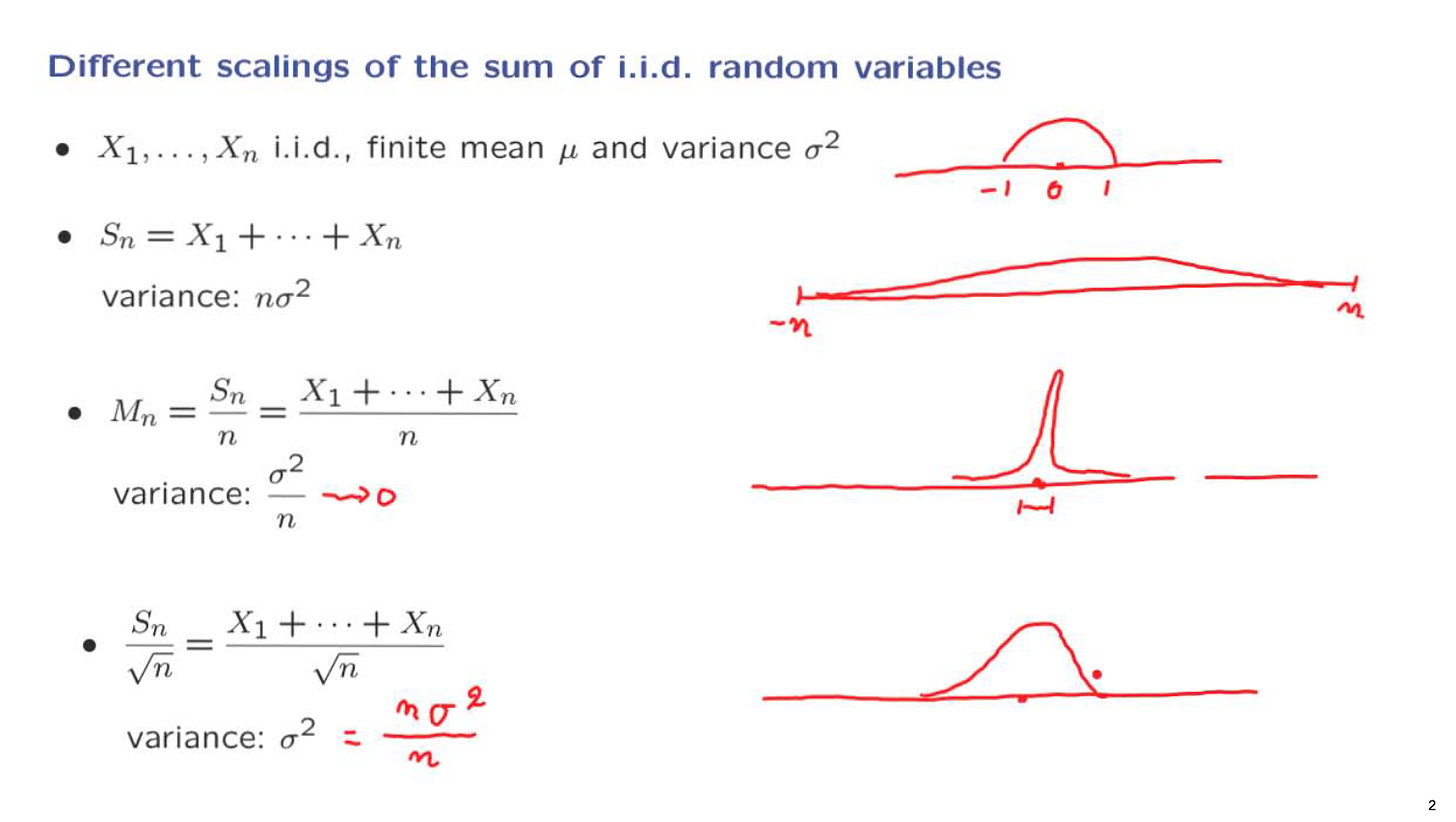

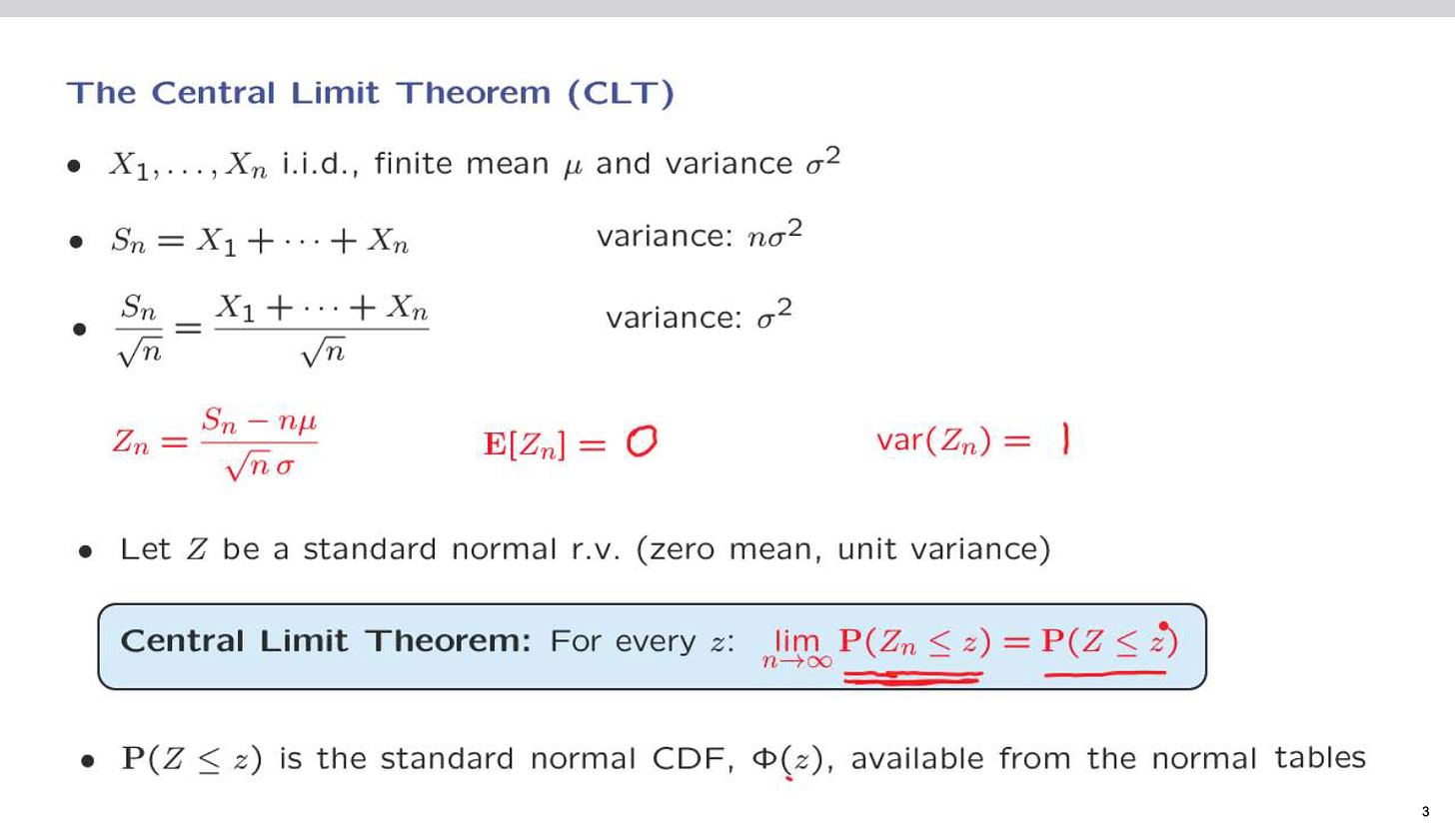

The Central Limit Theorem:-

If you have followed the blog till now, I would suggest watching this 10 min video now

In the above, you can clearly see how the variable Sn/sqrt(n) has a constant variance as the random variable Xi.

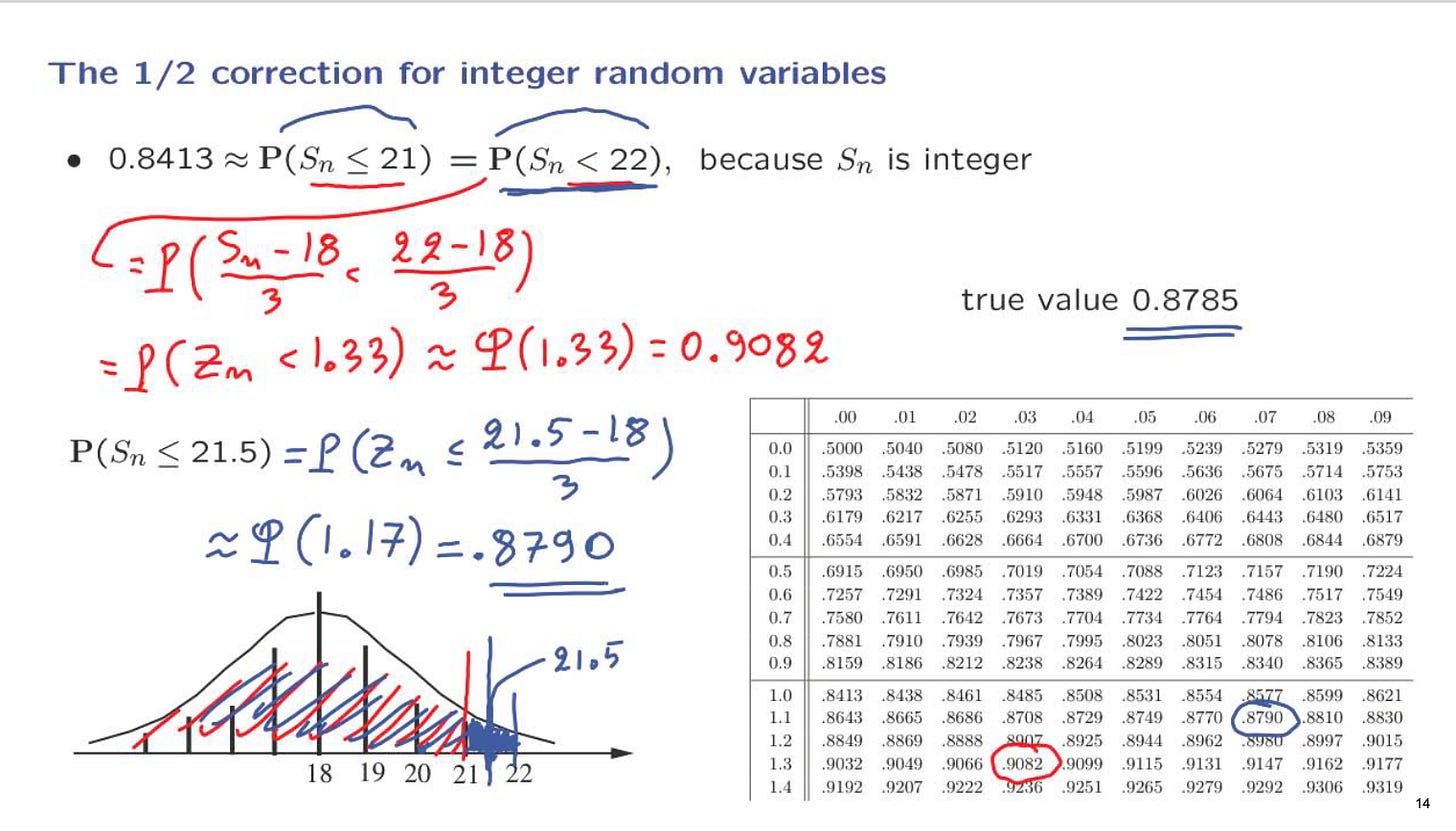

Examples of Central Limit Theorem:-

Say we have bernoulli variable (a fair coin) with a probablity p=0.5 for heads as well as tails. We can use the central limit theorem find the probability of getting less than or equal to 21 heads.

As we can clearly see that there is a difference between the actual value and the approximate value that we calculated using the tables. This is where we use the half correction as shown below.

Additional reading -

Hoeffding's inequality - Gives guarantees about bounded random variables

Chernoff bounds - The bound given by Markov is the "weakest" one. It is constant and does not change as n increases. The bound given by Chebyshev's inequality is "stronger" than the one given by Markov's inequality. The strongest bound is the Chernoff bound. It goes to zero exponentially fast.

The following video gives a great idea about Hoeffding's inequality. So, go through this to complete this quick intorduction.